There are two big problems with using LLMs to replace human effort. Both stem from a misunderstanding of the word intelligence. Given the “intelligence” demonstrated by today’s captains of industry (especially those extracting value from society without giving anything back), we can be forgiven for mistaking what it actually is.

On Intelligence

Intelligence, as a human or animal phenomenon, is a complex, multilayered, and ongoing activity that involves, among many other things, interpreting signals through various biological infrastructures and cognitive processes. For the biological beings that do it, the majority of the activity happens so fast that we are hardly aware of it.

What we call AI is a wonderful invention in its own right, but it is not intelligence. We should stick to calling it an LLM.

The Faulty Foundation, an Archive of Incomplete Drafts

LLMs were built by scraping as much of human utterance as possible, with limited ability to distinguish the refined from the unrefined. Yes, it’s worrying that copyrighted material was used, but the real problem is the foundation. The majority of human utterance is not worth reading.

We use expression and utterance as part of our ever-ongoing process to navigate reality and make meaning. Even when it’s put out in forums, emails, journals, etc., the vast majority of it is unrefined at best. We think and operate in incomplete sentences, cobbled together on the fly. That’s the way it is supposed to be. Much of this is far from the end result of our intelligence; it’s the warm-up to it, us stretching our cognitive limbs and clearing our throats.

Then there is a large chunk of human expression that is more refined in a loose sense, but isn’t intended to be noteworthy. It isn’t exactly mediocre, but it’s not our best work. Or maybe it is mediocre, like poor movies, tawdry romance novels, etc. These have their place as well. But we would find it laughable if university-level coursework intentionally trained you to produce that.

Neither of those two vast sources of data used to build LLMs is what you want as the baseline of human expression or effort. Our sublime work is by default rare. There is no shortcut to producing it.

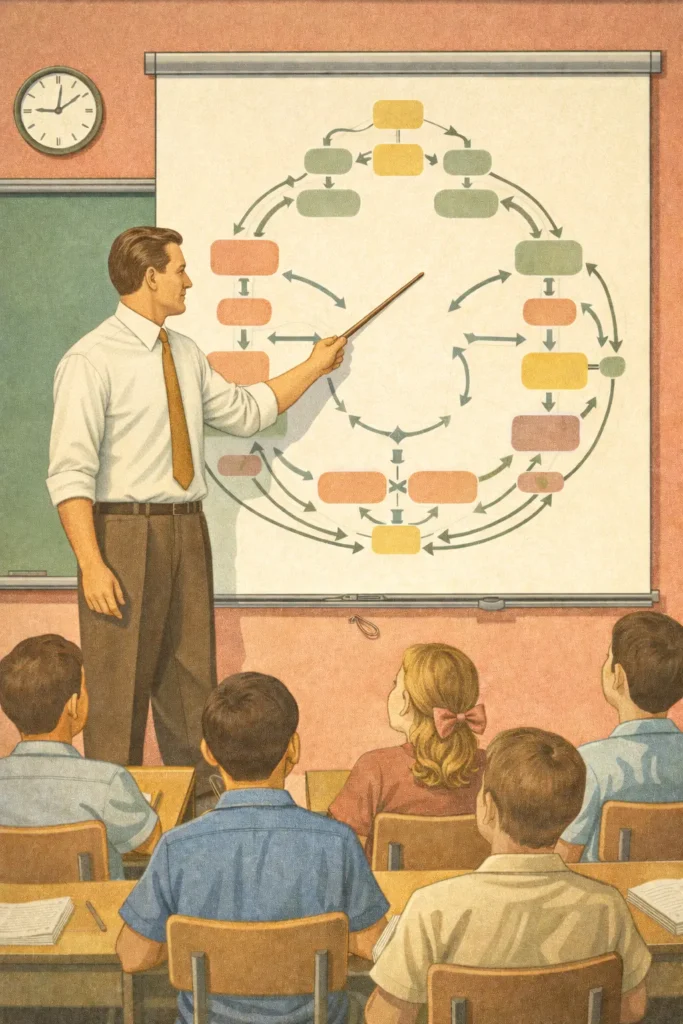

The Learning Curve of the Science of Human Development

The development of LLMs is somewhat parallel to the arc of education through the 20th century. For the early part of the era, education involved rote memorization. A student would be lectured by a professor and be expected to recall the information from memory. In the case of literacy and numeracy, the methodology involved memorizing multiplication tables and grammatical rules.

As the science of education developed, theories abounded on how a human actually developed mastery in any subject. As a kind of reaction to the drills of rote memorization, a movement that gained popularity in the 70s and 80s was grounded in notions of letting learners figure things out for themselves through exploration. The Whole Language approach was based on the belief that learning to read is a natural process, similar to learning to speak (it is not), and can be acquired through immersion and exposure rather than direct instruction. The theory essentially was that if a learner was exposed to enough input on the topic, they would discover the keys to mastery on their own.

This notion was incredibly naive and assumed a great deal about the subject being learned, the one doing the learning, and the context in which they were learning. Nonetheless, it became the primary educational methodology in many countries through the 90s and early 2000s.

During this time, neuroscience, the biological study of the brain, was developing. Now education is far more multifaceted. We understand that mastery of a subject involves all sorts of methodologies, but it basically comes down to common sense. You need to systematically and explicitly teach how to think about a topic in a way that is contextually relevant to each learner.

You can and should use discovery, memorization, and all the tools the human brain is capable of deploying as they are relevant to the specific learner and the topic. But before all that, you need a roadmap of how the brain will learn and use the information. You develop a progression of awarenesses that it needs in conjunction with procedural skills, a blueprint of what skills build on and support others, and a simple process for checking for the measurable development of all of them.

LLM development is sort of in its own Whole Language era.

The builders have yet to learn that osmosis is not a great teacher, or at least it delivers very unreliable and inconsistent results.

LLM development may be entering the phonics stage of education. This is a more systematic and developmentally sound approach but still lacks the individual context of the learner (we’ve since understood that learning is interdependent with context and have learned to adapt to diverse needs and strengths).

But the real problem is that those training LLMs are using a very different tool than a biological brain.

The Biological Brain

Without our realizing it, our process of making meaning involves filtering the input that our nervous system is constantly collecting. Before we are aware of any of it, the nervous system has already run an active, goal-directed filter on incoming signals, suppressing what it expects, flagging what it doesn’t, and passing upward only what is relevant to what we are currently doing or trying to understand. This is not a passive sieve. It is a calibrated, continuously adjusted process, shaped by experience, context, and prediction, and it is already forming our sense of meaning before we’re conscious of it.

The nervous system generates a working model of reality in advance, which is context, and updates that model continuously against what surprises it. In this sense, the world we move through is not discovered but constructed, perception by perception, in real time.

This fantastic process is made possible through a multilayered infrastructure. Our way of making meaning includes internal and external systems, such as distributed cognition, so that we can retain and recreate a wonderfully complex and dynamic context. This is presumed and subsumed into all our thoughts and actions. We sometimes struggle to see it as we are so close to it.

We might not be able to remember where we left our keys, but we know intrinsically their place and importance in our world regardless.

A computer cannot do any of that. In fact, it may never do that (nor need to). We are not computers or machines. Any mechanical or computational metaphor that tries to describe how we think and do is missing the mark. Our intelligence is an internal ecosystem of interdependent infrastructures collaborating nonstop to interpret, form, and create the reality, which is the context, we find ourselves in.

The Dual Problem

First, LLMs were built on “intelligence” we don’t want or need to remember, and definitely don’t want to emulate. Second, they cannot retain context like we can. They do not weight input the way a human would, shaped by lived experience, relationship, and embodied history. So LLM output will struggle to be truly relevant to us without lots of human interaction.

The second part of the problem is not only a product of the hardware LLMs operate on in comparison to our own, but also a product of how they were trained. If you have been exposed to mostly crap, even when you are told what excellence looks like, you’re still more likely to default to the training pattern (well, not you, as you are human and can actually learn and remember context).